AI Asset Generation – Processing – Application: Reflections

This project resulted in a real-time AR lens. Try it on Snapchat:

https://www.snapchat.com/unlock/type=SNAPCODE&uuid=2bb53443e03f47eda5bb5478a29b4bfa&metadata=01

Or on explorer:

https://lens.snap.com/experience/078ebe75-5756-47f1-b5f2-157aed386783

1. Introduction

This project explores the practical use of AI-generated 3D assets within a real-time production pipeline.

With the rapid emergence of AI 3D generation tools, creating models has become increasingly accessible. However, the usability of these assets, especially for specific styles or production needs, remains inconsistent. This project focuses on evaluating current tools, refining generated assets, and integrating them into a working real-time AR experience.

The goal is not only to generate assets, but to understand how they can be transformed into production-ready content.

2. Tool Evaluation for Targeted Generation

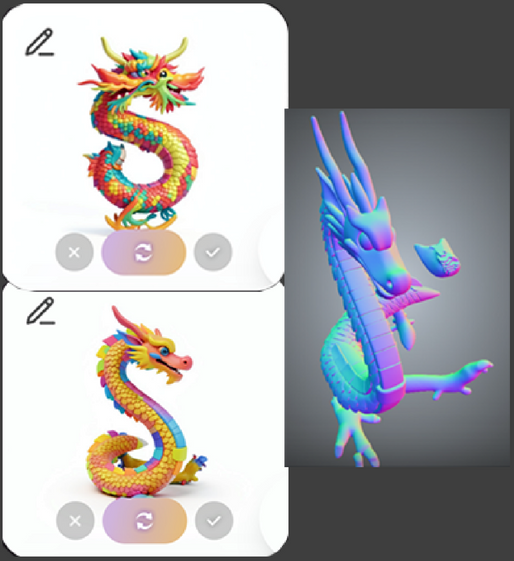

Several current AI-based 3D generation tools were tested, including Meshy, Tripo, Rodin, and Tencent Hunyuan 3D.

This evaluation was conducted with a specific goal: generating a stylized Chinese dragon with culturally accurate features and a structure suitable for rigging and animation.

The assessment focused on three key criteria: structural accuracy, stylistic alignment with the intended cultural reference, and usability for downstream workflows such as rigging and animation.

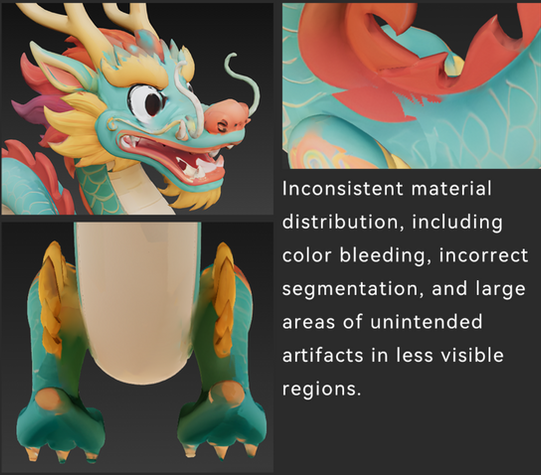

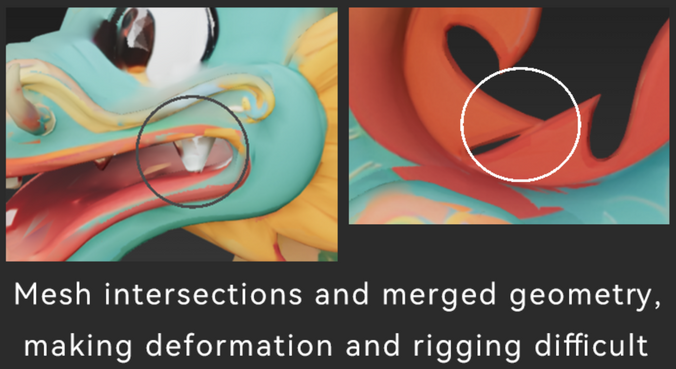

Most tools struggled to meet these requirements. Common issues included distorted proportions, lack of symmetry, incorrect interpretation of Chinese dragon features, and mesh artifacts that limited their usability in production.
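One of the issues listed above, lack of symmetry, can be quantified rather than judged by eye. The following is a minimal sketch, not the method used in this project: it assumes the mesh vertices are available as a list of (x, y, z) tuples and that the model is meant to be mirrored across the YZ plane. The function name `mirror_asymmetry` is hypothetical.

```python
import math


def mirror_asymmetry(vertices, axis=0):
    """Mean distance from each mirrored vertex to its nearest original vertex.

    vertices: list of (x, y, z) tuples (assumed input format).
    axis: index of the coordinate to flip (0 mirrors across the YZ plane).
    Returns 0.0 when every vertex has an exact mirrored counterpart.
    """
    if not vertices:
        raise ValueError("vertices must not be empty")
    total = 0.0
    for v in vertices:
        # Flip the chosen coordinate to get the mirrored position.
        mirrored = tuple(-c if i == axis else c for i, c in enumerate(v))
        # Brute-force nearest neighbour: O(n^2), acceptable for a quick
        # check on a decimated mesh, too slow for a dense sculpt.
        total += min(math.dist(mirrored, tuple(w)) for w in vertices)
    return total / len(vertices)


symmetric = [(-1.0, 0.0, 0.0), (1.0, 0.0, 0.0), (0.0, 2.0, 0.5)]
lopsided = [(-1.0, 0.0, 0.0), (1.2, 0.0, 0.0), (0.0, 2.0, 0.5)]

print(mirror_asymmetry(symmetric))  # exactly symmetric set
print(mirror_asymmetry(lopsided))   # one side pushed outward by 0.2
```

A score like this only compares vertex positions, so it is best read as a rough ranking signal between candidate outputs rather than a pass/fail test.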

Among the tested options, Tencent Hunyuan 3D produced the most usable results. Its outputs showed better alignment with the intended style, more coherent structure, and fewer topological issues, making it a more suitable starting point for further refinement.

3. Limitations of Generated Assets

Although the generated model provided a useful starting point, it lacked the structural consistency, material clarity, and topology required for production use.

As a result, a structured post-processing workflow was necessary to make the asset suitable for real-time applications.

4. Post-processing Pipeline for Production Readiness

① High Poly Reconstruction (ZBrush)

The initial step involved reconstructing the high poly model to correct structural inconsistencies and prepare for further processing.

② Retopology & UV Preparation

The high poly model was reimported into Hunyuan 3D for retopology, producing an optimized low poly mesh (~15K faces) with clean base topology.

UVs were then rebuilt and base color data transferred to prepare the asset for texturing, as detailed in the next step.
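The ~15K face target can be verified after retopology with a simple count. The sketch below assumes the low poly mesh has been exported as a Wavefront OBJ file and read into a string; the helper name `count_obj_faces` and the exact budget constant are illustrative assumptions, not part of the original workflow.

```python
def count_obj_faces(obj_text):
    """Count polygon faces and their triangle equivalent in OBJ text."""
    faces = 0
    triangles = 0
    for line in obj_text.splitlines():
        parts = line.split()
        # Face records start with "f" followed by one entry per corner.
        if parts and parts[0] == "f":
            corners = len(parts) - 1
            if corners >= 3:
                faces += 1
                # An n-sided polygon splits into n - 2 triangles.
                triangles += corners - 2
    return faces, triangles


FACE_BUDGET = 15_000  # the ~15K target; treating it as a hard cap is an assumption

sample = """
v 0 0 0
v 1 0 0
v 1 1 0
v 0 1 0
f 1 2 3 4
f 1 2 3
"""

faces, triangles = count_obj_faces(sample)
print(faces, triangles, faces <= FACE_BUDGET)
```

Reporting the triangle count alongside the face count is useful because real-time engines typically render triangles, so a quad-based mesh costs roughly twice its face count.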

③ Material & Texture Preparation

The UVs, which were lost during retopology, were rebuilt in Maya. Base color was transferred from the symmetric model exported from ZBrush, and additional elements such as eyeballs were created, with proper UVs applied to both the high and low poly meshes.

Maps were then baked from the high poly to the low poly mesh, and materials were refined in Substance Painter to correct inconsistencies and define PBR properties for real-time use.
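Because retopology can silently discard UVs, it helps to confirm that every face carries texture coordinates before baking. This is a minimal sketch assuming an OBJ export read into a string; `faces_missing_uvs` is a hypothetical helper, not a Maya or Hunyuan 3D function.

```python
def faces_missing_uvs(obj_text):
    """Return (faces_without_uvs, total_faces) for Wavefront OBJ text.

    OBJ face corners look like "v", "v/vt", "v/vt/vn" or "v//vn";
    a corner has a UV only when the second slash-separated field is set.
    """
    missing = 0
    total = 0
    for line in obj_text.splitlines():
        parts = line.split()
        if not parts or parts[0] != "f":
            continue
        total += 1
        for corner in parts[1:]:
            fields = corner.split("/")
            if len(fields) < 2 or fields[1] == "":
                missing += 1
                break  # one corner without a UV is enough to flag the face
    return missing, total


sample_obj = """
f 1/1 2/2 3/3
f 1//1 2//1 3//1
f 1 2 3
"""

print(faces_missing_uvs(sample_obj))
```

A nonzero first value indicates that UVs still need to be rebuilt for at least part of the mesh.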

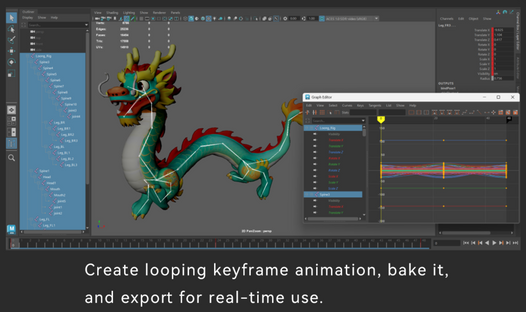

6. Rigging, Animation & Real-Time Integration

The asset was rigged and animated in Maya, then deployed in Lens Studio for real-time AR interaction.

7. Conclusion

This project demonstrates that while AI significantly accelerates the initial stage of 3D asset creation, the generated results are not yet production-ready. A structured post-processing pipeline remains essential to ensure structural integrity, material consistency, and usability in real-time environments.

Through this workflow, AI serves effectively as a starting point rather than a complete solution. Its value lies in reducing early production time, while traditional tools and manual refinement are still required to achieve final quality.

The project also highlights the importance of controllability in AI-driven asset generation, especially when dealing with culturally specific designs such as a stylized Chinese dragon.

Improving precision and consistency in this area remains a key direction for future workflows.